Beyond frictionless KYC: how banks can counter deepfake biometrics

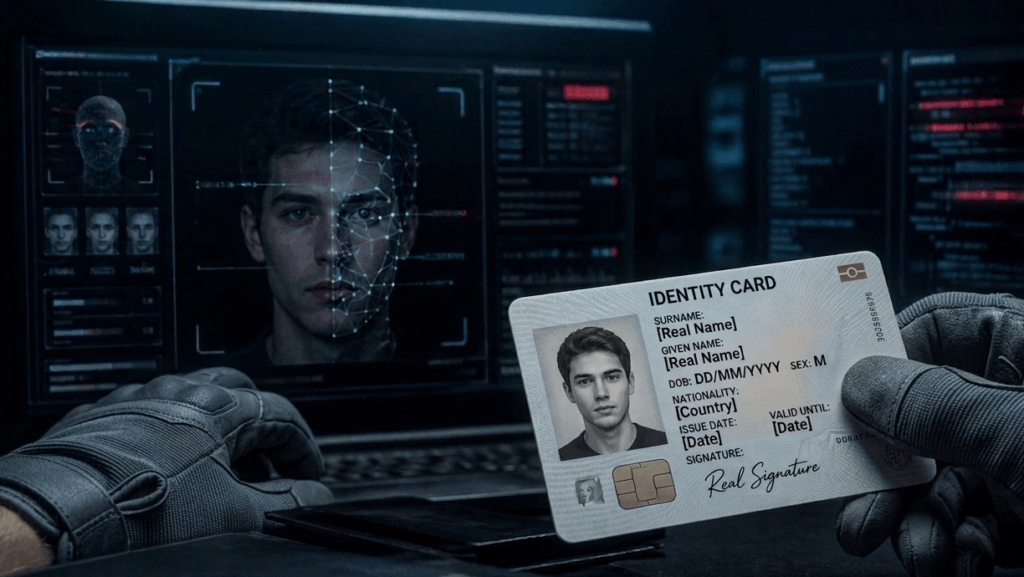

Over the past six months, the fraud pattern of identity theft and deepfake facial manipulation has become difficult to ignore for financial institutions. “Real names, fake faces” appears to be trending.

- In March 2026, the U.S. Internal Revenue Service announced charges in a fraud scheme in which synthetic identities were used to open bank accounts under false names and funnel proceeds.

- INTERPOL’s March 2026 global fraud assessment stated that member countries are reporting an increase in AI-facilitated synthetic identity fraud.

- Around the same time, a senior adversarial-intelligence leader at TD Bank demonstrated at the RSA Conference how low-cost AI toolkits and webcam plug-ins can be used to bypass live identity checks.

- The World Economic Forum reported in 2026 that face-swapping and camera-injection tools advertised on darknet forums, Telegram channels, and social platforms increasingly claim the ability to bypass digital KYC checks.

- In the Netherlands, police said in December 2025 that a suspect used stolen identity documents and deepfake facial manipulation to open 46 bank accounts in other people’s names.

- In July 2025, Bank of Italy governor Fabio Panetta cited CERTFin data showing that fake current accounts opened online using deepfakes or false identities accounted for roughly 250 cases out of 500,000 new accounts. That is about 0.05%, which may still sound like a small percentage, but it is a clear sign that AI-assisted account-opening fraud is booming.

Banks should default to security-first customer onboarding

This is not just a story about better fraud tools. It is about a design choice. For years, financial institutions have designed onboarding around speed, conversion and convenience. That is understandable. Customers expect digital ease, which makes the onboarding experience a competitive edge, and banks are under pressure to reduce abandonment and operating costs.

But in high-trust environments, that design choice creates a dangerous trade-off: short-term customer experience gains at the expense of long-term identity assurance.

A bank account is not a streaming subscription. It is a gateway into the financial system. Through it flow salaries, benefits, tax payments, credit, business transactions and, unfortunately, criminal proceeds. When the identity check at that gateway is too weak, the damage does not stop at one fraudulent onboarding. It spreads like a disease: mule-account networks, money laundering, first-party fraud and wider organized crime.

Customer-owned AI agents are coming

And the challenge is about to become even more complex. Customer AI agents are still a novelty for a niche of nerds, but Gartner predicts that by the end of 2029, 25% of total interactions between customers and their banks will be enabled by machine customers. Banks will need to verify more than the document and the individual. They will also need to verify authority.

Was this action initiated by the customer, by a customer-authorized AI agent, or by malicious automation impersonating either one? That raises new questions. What permissions were delegated, for which product, under which controls, and with which audit trail? In other words, the future of onboarding is not just about knowing who the customer is. It is also about knowing who, or what, is acting on that customer’s behalf.
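To make those questions concrete, here is a minimal sketch of how a delegation check could look. It is an illustration under stated assumptions, not a description of any bank's system: the `Delegation` record, the `AuditTrail` class, the `is_action_authorized` function and the example product and action names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class Delegation:
    """Hypothetical record of what a customer has allowed an AI agent to do."""
    customer_id: str
    agent_id: str
    products: frozenset   # products the agent may act on
    actions: frozenset    # actions the agent may perform
    expires_at: datetime  # delegation is invalid from this moment on


@dataclass
class AuditTrail:
    """Hypothetical in-memory audit log; a real one would be tamper-evident."""
    entries: List[str] = field(default_factory=list)

    def record(self, message: str) -> None:
        self.entries.append(message)


def is_action_authorized(
    delegation: Optional[Delegation],
    agent_id: str,
    product: str,
    action: str,
    now: datetime,
    audit: AuditTrail,
) -> bool:
    """Allow the action only if a valid delegation covers it; always log the decision."""
    reasons = []
    if delegation is None or delegation.agent_id != agent_id:
        reasons.append("no delegation on file for this agent")
    else:
        if now >= delegation.expires_at:
            reasons.append("delegation expired")
        if product not in delegation.products:
            reasons.append("product not delegated")
        if action not in delegation.actions:
            reasons.append("action not delegated")

    allowed = not reasons
    audit.record(
        f"{now.isoformat()} agent={agent_id} product={product} "
        f"action={action} allowed={allowed} reasons={reasons}"
    )
    return allowed


if __name__ == "__main__":
    trail = AuditTrail()
    grant = Delegation(
        customer_id="cust-1",
        agent_id="agent-7",
        products=frozenset({"savings"}),
        actions=frozenset({"view_balance"}),
        expires_at=datetime(2026, 5, 1, tzinfo=timezone.utc),
    )
    moment = datetime(2026, 4, 1, tzinfo=timezone.utc)
    print(is_action_authorized(grant, "agent-7", "savings", "view_balance", moment, trail))
    print(is_action_authorized(grant, "agent-7", "savings", "open_account", moment, trail))
```

The design choice worth noting is that every request is denied unless an explicit, unexpired delegation covers both the product and the action, and that denials are logged as carefully as approvals.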

It is tempting to see new digital onboarding solutions as the medicine, but strong verification is rooted in actual, deterministic identity verification right at the start of the relationship.

Digital-native does not automatically mean digital-only

Now, we know that one of the more persistent assumptions is that younger customers want every interaction to be fully remote. That idea is becoming outdated. Digital-native customers may prefer mobile-first journeys for routine tasks, but Gartner sees a significant rise in preference for human reassurance and in-person contact when the moment carries financial or personal consequence.

That matters here. Opening a bank account, establishing a new financial commitment or handing over the keys to one’s identity is not a low-stakes interaction. For some customers, especially those who grew up in the post-Covid period, a well-designed in-person verification step is not an inconvenience. It is a signal that the institution takes risk, identity and trust seriously.

Strategic friction versus security theatre

Many banks know that a fully frictionless journey is not ideal, and they create calculated points of friction. But there is a meaningful difference between strategic friction and the appearance of safety.

True strategic friction – a branch visit, a human handoff, a delayed activation step or an escalation after a failed chip read – can increase assurance, deter abuse and make customers feel appropriately protected. But a lot of calculated friction is mostly cosmetic: a polished remote journey with extra clicks and reassuring language that still rests on weak underlying proof.

Adding performative steps to make people feel safe is like installing a big lock on a door that still has no wall around it. The goal should not be to make someone feel safe. It should be to make them safe. Trusted onboarding gives enough ease to stay usable, enough friction to signal seriousness, and enough assurance to stand up when someone actively tries to break the process.

Why physical documents are the best identity safeguard

A passport or national ID card is not just an image with a name and a face. It is a highly engineered security product. Governments and document manufacturers invest in overt and covert security features that are meant to be inspected through look, tilt and feel, not just through an uploaded image or a short video sequence.

The moment a document is reduced to a photo, a scan or a selfie-based flow, many of its smartest defenses become harder to assess properly, or disappear from the process entirely. What gets verified is no longer the document itself, but a digital representation of it. That is precisely the environment in which deepfakes, synthetic documents and AI-assisted identity fraud thrive.

NFC chip authenticity does not equal identity certainty

Some present NFC chip reading as the answer. It is not. NFC chips are certainly useful, and do raise the bar. When done correctly, chip reading can confirm that the data on the chip is authentic and issuer-signed, making it a meaningful addition to optical checks. But it does not prove that the physical document was legitimately obtained, or that the person presenting it is the rightful holder. Chip authenticity is simply not the same as identity certainty.

Our own review of chip-fraud cases points to a second problem: process design. Fraudsters do not necessarily need to clone a chip to beat an NFC-enabled workflow. In many cases, the real opportunity lies in the exception path. If a chip is absent, damaged or unreadable and the process quietly falls back to weaker checks, the chip has not been defeated. The process has. That is why a non-read should be treated as a risk event and an escalation trigger, not as routine friction to be bypassed.
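As an illustration of that principle, the sketch below shows an exception path with no silent fallback. It is a simplified example under assumptions: the `ChipRead` and `NextStep` enums, the `handle_chip_read` function and the risk-event strings are hypothetical names, and a real workflow would carry far more context.

```python
from enum import Enum
from typing import List


class ChipRead(Enum):
    """Hypothetical outcomes of an NFC chip read."""
    VERIFIED = "verified"      # data read and issuer signature validated
    ABSENT = "absent"          # no chip detected
    DAMAGED = "damaged"        # chip detected but not functioning
    UNREADABLE = "unreadable"  # read attempted but failed


class NextStep(Enum):
    """Hypothetical next steps in the onboarding flow."""
    CONTINUE_REMAINING_CHECKS = "continue_remaining_checks"
    ESCALATE = "escalate"


def handle_chip_read(result: ChipRead, risk_events: List[str]) -> NextStep:
    """Decide what happens after the chip step.

    A verified chip only lets the remaining checks continue, because chip
    authenticity is not identity certainty. Every non-read is recorded as a
    risk event and escalated; there is deliberately no branch that falls
    back to weaker checks.
    """
    if result is ChipRead.VERIFIED:
        return NextStep.CONTINUE_REMAINING_CHECKS

    risk_events.append(f"chip_non_read:{result.value}")
    return NextStep.ESCALATE


if __name__ == "__main__":
    events: List[str] = []
    for outcome in ChipRead:
        print(outcome.value, "->", handle_chip_read(outcome, events).value)
    print(events)
```

What escalation means in practice (a human review, a branch visit, a delayed activation) is a policy decision this sketch leaves open.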

Remote identification alone should not be the standard

We are not arguing that digital onboarding should disappear. It has real value for accessibility, speed and scale. Device intelligence, session analysis, behavioural signals, NFC and document checks all have a role to play. But they should be treated as layers in an assurance model, not as proof that remote onboarding has become equivalent to physical verification.

For the establishment of a new, high-trust relationship, especially opening a bank account, the original passport or ID card should once again be physically verified as a standard control. Not just for new customers, but maybe also for existing customers moving to higher-value and higher-risk products.

That verification can happen in a branch, through trusted partner locations, or through regional verification points. What matters is the principle: the physical document, the physical person and the transaction context should be assessed together when the stakes are systemic.
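The layered model described in this section can be sketched in a few lines. This is a deliberately simplified illustration: the `Signals` fields, the three `Assurance` levels, the rule that any failed layer drops the result to the lowest level, and the `may_open_account` policy are all assumptions made for the example, not an established scoring method.

```python
from dataclasses import dataclass
from enum import IntEnum


class Assurance(IntEnum):
    """Hypothetical assurance levels, ordered from weakest to strongest."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Signals:
    """Hypothetical outcome of each layer for one onboarding attempt."""
    device_ok: bool
    session_ok: bool
    behaviour_ok: bool
    chip_verified: bool
    document_checks_ok: bool
    physically_verified: bool = False  # document and person checked in person


def assurance_level(signals: Signals) -> Assurance:
    """Combine the layers; remote layers alone never reach HIGH."""
    remote_layers = (
        signals.device_ok,
        signals.session_ok,
        signals.behaviour_ok,
        signals.chip_verified,
        signals.document_checks_ok,
    )
    # Assumption: a single failed layer is treated as a red flag.
    if not all(remote_layers):
        return Assurance.LOW
    # Only physical verification lifts the result above MEDIUM.
    if signals.physically_verified:
        return Assurance.HIGH
    return Assurance.MEDIUM


def may_open_account(signals: Signals) -> bool:
    """Assumed policy: opening an account requires the highest level."""
    return assurance_level(signals) >= Assurance.HIGH


if __name__ == "__main__":
    remote_only = Signals(True, True, True, True, True)
    in_person = Signals(True, True, True, True, True, physically_verified=True)
    print(assurance_level(remote_only).name, may_open_account(remote_only))
    print(assurance_level(in_person).name, may_open_account(in_person))
```

The point of the cap is structural: however many remote signals pass, they add up to a layer of evidence, not to the equivalent of physical verification.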

Fight identity fraud as a foundational risk

Identity fraud is rarely a standalone offense. More often, it is the entry point for money laundering, mule-account networks, loan fraud and wider organized crime. That is why it should be treated as a foundational risk, not as a marginal inconvenience in the pursuit of frictionless customer onboarding.

If financial institutions truly want to modernize onboarding and get ready for a future with, for example, customer AI agents, they should stop asking how to remove every last point of friction. The better question is where trust actually comes from, and which controls deserve to remain physical. Because in high-stakes systems, identity certainty should outweigh customer convenience.

Author: Maickel van Ooijen (Principal Document Expert)